In the high-stakes US SaaS landscape, “traffic” is a vanity metric; “qualified pipeline” is the only currency that matters.

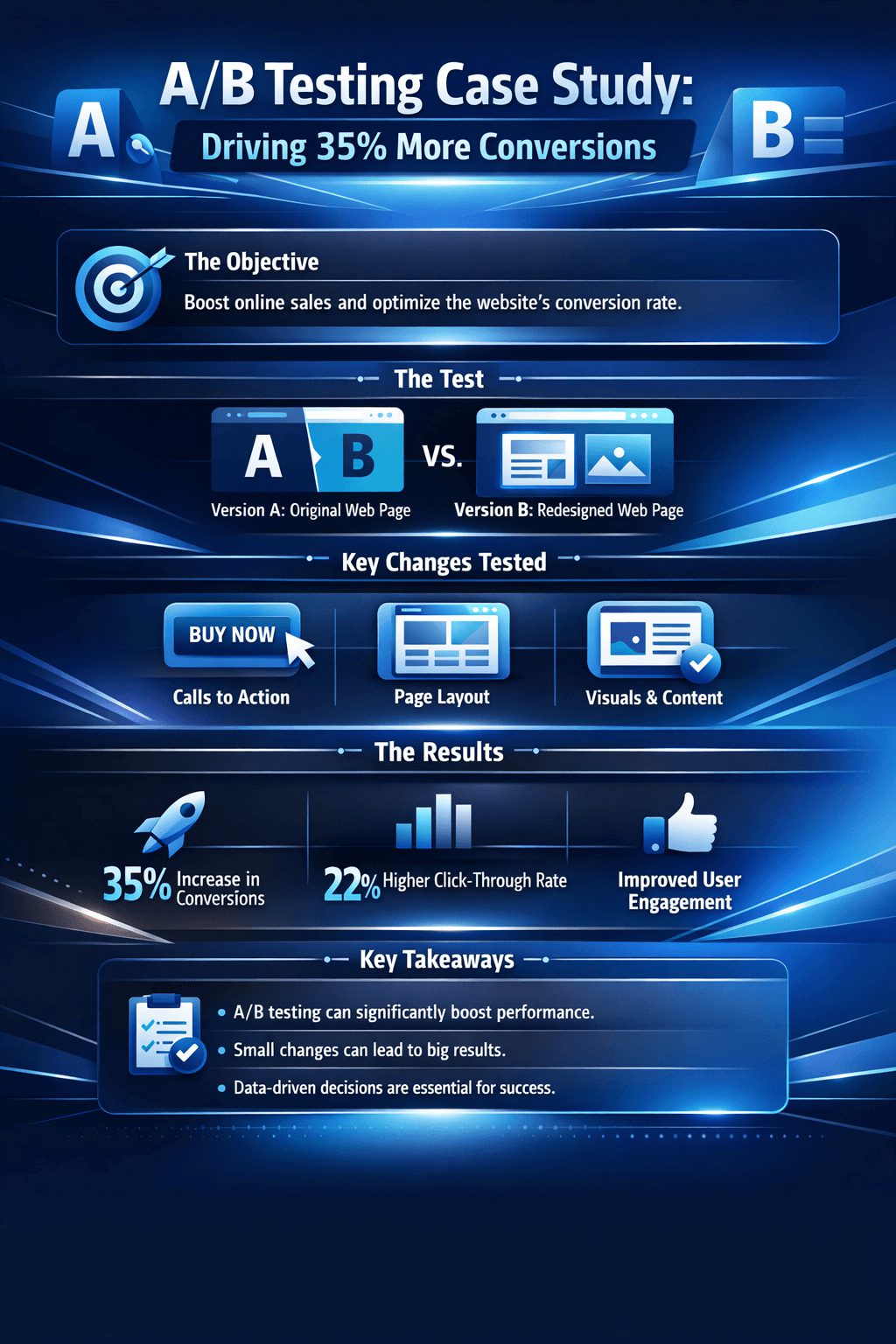

When a Silicon Valley-based enterprise provider faced stagnant conversion rates despite a multi-million-dollar ad spend, SIS International was brought in to re-engineer the digital experience. By moving beyond surface-level UI changes and implementing a deep-funnel A/B testing framework, we identified the psychological friction points preventing C-suite engagement.

This study explores how a shift toward “Authority-Driven Design” and frictionless data capture transformed a 1.4% conversion rate into a $3.8M revenue engine.


A/B Testing Case Study: How Industrial Leaders Quantify Commercial Decisions

Industrial buyers commit capital based on evidence, not intuition. The same standard applies to the decisions that shape how products reach them.

An A/B testing case study, when designed with field discipline, isolates the commercial variable that moves a metric and quantifies the lift. For Fortune 500 industrial firms, this method has migrated from digital marketing into merchandising, channel programs, technical sales enablement, and aftermarket pricing. The shift reflects a harder demand from procurement-led buyers: prove the program works before scaling it.

This article examines how leading industrial and distribution-driven businesses structure controlled experiments that survive CFO scrutiny, and where the design choices separate signal from noise.

Why Controlled Experiments Now Drive Industrial Commercial Strategy

Industrial commercial leaders historically defaulted to pre/post comparisons or single-market pilots. Both approaches confound the variable of interest with seasonality, sales rep effort, weather, and competitor activity. A properly matched A/B design strips out these confounders by running the control and treatment cells in parallel across comparable outlets, territories, or accounts.

The pressure to adopt this rigor comes from three places. Procurement organizations now demand total cost of ownership models with verifiable inputs. Trade spend and channel program budgets face quarterly justification. Predictive maintenance sizing, aftermarket revenue strategy, and installed base analytics all depend on segment-level lift estimates that pre/post measurement cannot deliver.

SIS International Research has observed across B2B expert interviews in industrial distribution that the commercial programs surviving budget cycles are those tied to a controlled experiment with documented matched-cell design, not those defended by anecdotal field reports.

Designing an A/B Testing Case Study That Holds Up Under Scrutiny

The architecture of a defensible experiment rests on five decisions made before any field activity begins.

Unit of randomization. The unit must match the level at which the intervention is delivered. For a merchandising package deployed at the outlet, the outlet is the unit. For a technical training program delivered to a sales team, the team is the unit. Randomizing at one level and analyzing at a finer one (outlets assigned, individual orders counted) overstates the effective sample size and inflates false positives.
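The design-effect formula used for cluster-randomized designs shows why. With an average of m observations per randomized unit and an intra-cluster correlation ICC for the KPI, a naive analysis understates variance by a factor DEFF = 1 + (m - 1) × ICC. The Python sketch that follows is illustrative only: the 40 outlets, 50 orders per outlet, and ICC of 0.05 are assumptions, not SIS fieldwork figures.

```python
def design_effect(cluster_size: float, icc: float) -> float:
    """Variance inflation from analyzing clustered observations as if independent.

    cluster_size: average observations (e.g. orders) per randomized unit (outlet).
    icc: intra-cluster correlation of the KPI within a unit (assumed, not measured here).
    """
    return 1.0 + (cluster_size - 1.0) * icc


def effective_sample_size(n_observations: int, cluster_size: float, icc: float) -> float:
    """How many independent observations the clustered sample is actually worth."""
    return n_observations / design_effect(cluster_size, icc)


# Hypothetical inputs: 40 outlets randomized, 50 orders logged per outlet.
n_orders = 40 * 50
deff = design_effect(cluster_size=50, icc=0.05)
print(f"design effect: {deff:.2f}")
print(f"effective n: {effective_sample_size(n_orders, 50, 0.05):.0f}")
```

With these assumed inputs, 2,000 logged orders carry roughly the information of 580 independent observations, so a test that counts all 2,000 as independent reports standard errors about 1.9 times too small.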

Matched-cell construction. Outlets, territories, or accounts in the treatment cell must be paired with controls on baseline volume, format, geography, and competitive density. Unmatched cells force statistical adjustments that erode credibility with finance reviewers.
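One common way to build matched cells, sketched here under stated assumptions, is to group units into strata on the categorical matching variables, pair neighbors on baseline volume within each stratum, and flip a coin inside every pair. The `Outlet` fields, the stratum definition, and the rule of dropping an odd leftover unit are hypothetical choices for illustration, not a documented SIS procedure.

```python
import random
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Outlet:  # hypothetical record layout
    outlet_id: str
    fmt: str               # store format
    region: str            # geography
    competitor_tier: str   # competitive density band
    baseline_volume: float


def matched_pairs(outlets: list[Outlet], seed: int = 7) -> dict[str, str]:
    """Pair outlets within (format, region, competitor tier) strata by nearest
    baseline volume, then randomize treatment inside each pair."""
    rng = random.Random(seed)  # fixed seed so the assignment is reproducible
    strata = defaultdict(list)
    for o in outlets:
        strata[(o.fmt, o.region, o.competitor_tier)].append(o)

    assignments = {}
    for members in strata.values():
        members.sort(key=lambda o: o.baseline_volume)
        # Adjacent outlets in volume order form a pair; an odd leftover is excluded.
        for a, b in zip(members[0::2], members[1::2]):
            treat, control = (a, b) if rng.random() < 0.5 else (b, a)
            assignments[treat.outlet_id] = "treatment"
            assignments[control.outlet_id] = "control"
    return assignments


outlets = [
    Outlet("A", "small", "north", "high", 120.0),
    Outlet("B", "small", "north", "high", 125.0),
    Outlet("C", "small", "north", "high", 300.0),
    Outlet("D", "small", "north", "high", 310.0),
    Outlet("E", "wholesale", "south", "low", 900.0),  # no partner in its stratum
]
print(matched_pairs(outlets))
```

Pairing before randomizing means every treated outlet has a control of similar size and context, so finance reviewers see a like-for-like comparison rather than a regression adjustment.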

Sample size and power. The minimum detectable effect must be set against the business case. A program promising an eight percent lift cannot be evaluated with a sample sized to detect fifteen percent.
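The standard two-sample formula makes the point concrete. Per-arm sample size is n = 2σ²(z₁₋α/₂ + z₁₋β)² / δ², so it grows with the inverse square of the minimum detectable effect δ: sizing for 8% instead of 15% needs roughly (15/8)² ≈ 3.5 times as many units per arm. The sketch assumes independent units, a continuous KPI, and an illustrative coefficient of variation of 0.6; α = 0.05 and 80% power are conventional defaults, not figures from the source.

```python
import math
from statistics import NormalDist


def n_per_arm(relative_lift: float, cv: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Units per arm for a two-sided, two-sample comparison of means.

    relative_lift: minimum detectable effect as a fraction of the control mean.
    cv: coefficient of variation (sd / mean) of the KPI across units (assumed).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * cv ** 2 * (z_alpha + z_beta) ** 2 / relative_lift ** 2)


# Illustrative only: unit-level sell-through with an assumed CV of 0.6.
print("sized for 15% lift:", n_per_arm(0.15, cv=0.6))
print("sized for 8% lift: ", n_per_arm(0.08, cv=0.6))
```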

KPI hierarchy. One primary KPI, two or three secondary KPIs, and a fixed list of guardrail metrics. Industrial experiments fail credibility tests when teams hunt through dozens of metrics for a winning narrative.
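Encoding the hierarchy as a frozen object is one way to make "fixed" literal: the plan rejects malformed hierarchies at creation, and any metric not declared up front can be labeled exploratory at read-out. The class and metric names are hypothetical, and the limits mirror the rule stated above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiPlan:  # hypothetical structure, not an SIS template
    primary: str
    secondary: tuple[str, ...]
    guardrails: tuple[str, ...]

    def __post_init__(self):
        if not 2 <= len(self.secondary) <= 3:
            raise ValueError("specify two or three secondary KPIs")
        if not self.guardrails:
            raise ValueError("specify at least one guardrail metric")
        declared = (self.primary, *self.secondary, *self.guardrails)
        if len(set(declared)) != len(declared):
            raise ValueError("each metric may appear only once in the hierarchy")

    def is_declared(self, metric: str) -> bool:
        """True only for metrics fixed in the plan; anything else is exploratory."""
        return metric in (self.primary, *self.secondary, *self.guardrails)


plan = KpiPlan(
    primary="quote_to_order_conversion",
    secondary=("quote_cycle_days", "avg_order_value"),
    guardrails=("gross_margin_pct", "return_rate"),
)
print(plan.is_declared("web_sessions"))  # not pre-declared, so exploratory
```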

Measurement instrument. Exit interviews, scanner data, CRM-logged orders, or shipment data each carry distinct biases. The instrument must be specified in the protocol, not chosen after results land.

A Field Example: Merchandising Effectiveness in Distributed Retail

Consider a multinational consumer brand evaluating point-of-sale materials across thousands of independent outlets in Southeast Asia. The brand wanted to know which merchandising package (permanent fixtures versus non-permanent posters and stickers) drove higher brand recall and purchase intent in small-format trade.

In structured field work conducted by SIS across sari-sari stores and wholesaler outlets in the Philippines, exit interviews at matched outlet pairs measured aided and unaided recall, with cell sizes calibrated to detect a meaningful lift at conventional confidence thresholds. The matched-pair design controlled for outlet volume tier, urban density, and competitor presence within a defined catchment radius.
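Because each treated outlet has a matched control, the natural analysis works on within-pair differences rather than on two independent groups. The source does not say which test SIS applied; the sketch below is one standard option, using outlet-level recall rates and a normal-approximation confidence interval, which is reasonable with many pairs (with few pairs a t critical value is more appropriate). The data are invented for illustration.

```python
import math
from statistics import NormalDist, mean, stdev


def paired_lift(treated: list[float], control: list[float], confidence: float = 0.95):
    """Mean within-pair difference in recall rate with a normal-approximation CI.

    treated[i] and control[i] are outlet-level recall rates for matched pair i.
    """
    if len(treated) != len(control) or len(treated) < 2:
        raise ValueError("need at least two complete pairs")
    diffs = [t - c for t, c in zip(treated, control)]
    d_bar = mean(diffs)
    se = stdev(diffs) / math.sqrt(len(diffs))
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return d_bar, (d_bar - z * se, d_bar + z * se)


# Hypothetical aided-recall rates for four matched outlet pairs.
treated = [0.42, 0.38, 0.51, 0.45]
control = [0.35, 0.36, 0.44, 0.40]
lift, (low, high) = paired_lift(treated, control)
print(f"mean paired lift: {lift:.3f}, 95% CI [{low:.3f}, {high:.3f}]")
```

Differencing within pairs removes the shared outlet-tier, density, and competitor effects the matching was designed to hold constant, which typically tightens the interval compared with an unpaired comparison.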

The structural lessons translate directly to industrial contexts. Replace sari-sari outlets with industrial distributors. Replace point-of-sale materials (POSM) with co-branded technical literature, counter day programs, or distributor portal redesigns. The randomization logic, matched-cell construction, and exit-interview discipline still hold.

Where Industrial A/B Tests Generate the Highest Returns

Five application areas consistently produce experiments with quantifiable commercial value.

| Application | Treatment Variable | Primary KPI |
|---|---|---|
| Distributor program design | Co-op funding tier structure | Sell-through volume per distributor |
| Technical sales enablement | Configurator tool versus PDF specs | Quote-to-order conversion |
| Aftermarket pricing | Bundle discount structure | Attach rate on consumables |
| Field service routing | Predictive versus scheduled dispatch | First-time fix rate |
| Trade show ROI | Booth configuration and lead capture | Cost per qualified opportunity |

Source: SIS International Research

The pattern across these applications is consistent. The variables that look smallest in isolation (a one-page versus three-page technical spec, a fifteen versus twenty percent volume rebate threshold) generate the largest verified lift when tested cleanly. Senior commercial leaders frequently underweight these variables because they lack a measurement instrument that isolates them.

The Five-Decision Framework for Industrial Experimentation

SIS uses a five-decision framework to evaluate whether an A/B testing case study will produce evidence that survives review.

- Decision intent. What action will the result authorize or block?

- Effect size. What lift is required to justify the program economically?

- Cell architecture. Are the units of randomization and matching variables specified?

- Instrument integrity. Is the measurement method robust to fieldwork drift?

- Read-out protocol. Who interprets results, against what pre-registered hypotheses?
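Teams that keep protocols in a structured form can turn the framework into a gating check before fieldwork is approved. This is a minimal sketch, assuming a protocol stored as a dictionary with one free-text answer per decision; the key names are hypothetical.

```python
FIVE_DECISIONS = {
    "decision_intent": "What action will the result authorize or block?",
    "effect_size": "What lift is required to justify the program economically?",
    "cell_architecture": "Are the units of randomization and matching variables specified?",
    "instrument_integrity": "Is the measurement method robust to fieldwork drift?",
    "readout_protocol": "Who interprets results, against what pre-registered hypotheses?",
}


def unanswered(protocol: dict[str, str]) -> list[str]:
    """Return the framework questions the protocol leaves missing or blank."""
    return [q for key, q in FIVE_DECISIONS.items() if not protocol.get(key, "").strip()]


# Hypothetical draft protocol with two decisions still open.
draft = {
    "decision_intent": "Authorize national rollout of the configurator",
    "effect_size": "At least 8% lift in quote-to-order conversion",
    "cell_architecture": "Sales territories, matched on volume and competitor share",
    "instrument_integrity": "",
}
for question in unanswered(draft):
    print("OPEN:", question)
```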

Programs that pass all five decisions consistently produce findings that hold up in subsequent national rollouts. Programs that skip any one of them tend to generate findings that reverse upon scaling.

Common Design Choices That Strengthen Industrial Experiments

Three design choices distinguish the experiments that lead to confident scaling decisions.

Pre-register the analysis plan. The hypothesis, primary KPI, and statistical test are documented before fieldwork begins. This eliminates retrospective metric selection and protects the credibility of the read-out.
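One lightweight way to make pre-registration verifiable, sketched under assumptions, is to record a cryptographic fingerprint of the plan before fieldwork and recompute it at read-out. The plan fields are hypothetical; canonical JSON (sorted keys, fixed separators) ensures the same content always produces the same hash.

```python
import hashlib
import json


def plan_fingerprint(plan: dict) -> str:
    """SHA-256 of a canonical JSON encoding of the analysis plan."""
    canonical = json.dumps(plan, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical plan, fingerprinted and filed (with a date) before fieldwork.
plan = {
    "hypothesis": "Configurator raises quote-to-order conversion vs PDF specs",
    "primary_kpi": "quote_to_order_conversion",
    "test": "two-sided difference in proportions, alpha=0.05",
}
registered = plan_fingerprint(plan)

# At read-out, recompute: any change to the plan changes the fingerprint.
print("plan unchanged:", plan_fingerprint(plan) == registered)
```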

Run guardrail metrics in parallel. A merchandising lift that improves recall but reduces basket size is a net loss. Industrial programs that improve quote conversion but degrade margin are equally suspect. Guardrails make tradeoffs visible.
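A guardrail only makes a tradeoff visible if the read-out rule says what happens when it trips. The sketch below is one hypothetical decision rule, not a standard: scale only when the primary KPI's confidence interval excludes zero and no guardrail's interval admits a decline worse than a pre-set tolerance (2% here, an assumed value). It also assumes every metric is oriented so that higher is better.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    name: str
    lift: float     # treatment minus control, as a fraction of control
    ci_low: float
    ci_high: float


def read_out(primary: Estimate, guardrails: list[Estimate], max_harm: float = -0.02) -> str:
    """Scale only if the primary KPI improves and no guardrail plausibly
    degrades by more than max_harm (assumed tolerance, e.g. -0.02 = 2% decline)."""
    breached = [g.name for g in guardrails if g.ci_low < max_harm]
    if breached:
        return "block: guardrail risk on " + ", ".join(breached)
    if primary.ci_low > 0:
        return "scale"
    return "hold: primary effect not distinguishable from zero"


# Hypothetical read-out: conversion improves, but margin may erode.
conversion = Estimate("quote_to_order", 0.06, 0.02, 0.10)
margin = Estimate("gross_margin", -0.03, -0.05, -0.01)
print(read_out(conversion, [margin]))
```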

Match cells on competitive context. In industrial markets where two or three competitors hold majority share, regional competitive intensity moves the dependent variable as much as the treatment. Matched-cell design must include competitor presence as a stratifying variable.
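After assignment, a quick balance check confirms that competitive context actually ended up comparable across cells. A widely used diagnostic is the standardized mean difference (SMD) of the covariate between arms, with |SMD| above roughly 0.1 often treated as a sign of imbalance; that threshold is a rule of thumb, and the competitor counts below are invented.

```python
import math
from statistics import mean, variance


def standardized_mean_difference(treated: list[float], control: list[float]) -> float:
    """(mean_t - mean_c) / pooled SD, a common covariate-balance diagnostic."""
    diff = mean(treated) - mean(control)
    pooled_sd = math.sqrt((variance(treated) + variance(control)) / 2)
    if pooled_sd == 0:
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / pooled_sd


# Hypothetical number of major competitors within each outlet's catchment.
treated_competitors = [1, 2, 2, 3, 1, 2]
control_competitors = [1, 2, 3, 3, 1, 2]
smd = standardized_mean_difference(treated_competitors, control_competitors)
print(f"SMD on competitor presence: {smd:.2f}", "(check)" if abs(smd) > 0.1 else "(ok)")
```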

Building Experimentation Into Commercial Operating Cadence

SIS International’s proprietary research across industrial channel programs indicates that organizations running four or more controlled commercial experiments per year develop a measurable advantage in trade spend allocation efficiency, with budget reallocation cycles tightening from annual to quarterly.

The advantage compounds. Each experiment produces a verified effect size, which improves the prior assumptions feeding the next experiment. Over three to five cycles, the organization builds a proprietary effect-size library that competitors cannot replicate without equivalent field investment. This library, more than any single A/B testing case study, is the durable commercial asset.
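The source does not specify how prior effect sizes feed the next experiment. One simple option is fixed-effect (inverse-variance) pooling, which weights each past estimate by its precision and yields a combined estimate and standard error that can serve as a planning prior. It assumes the experiments measure the same underlying effect; if programs differ materially by region or channel, a random-effects model would be more appropriate. The library entries below are hypothetical.

```python
import math


def pooled_effect(estimates: list[tuple[float, float]]) -> tuple[float, float]:
    """Fixed-effect (inverse-variance) pooling of comparable past experiments.

    estimates: (effect, standard_error) pairs.
    Returns the pooled effect and its standard error.
    """
    weights = [1 / se ** 2 for _, se in estimates]
    total = sum(weights)
    effect = sum(w * e for w, (e, _) in zip(weights, estimates)) / total
    return effect, math.sqrt(1 / total)


# Hypothetical library: relative lifts from three rebate-threshold tests.
library = [(0.06, 0.02), (0.04, 0.02), (0.09, 0.04)]
effect, se = pooled_effect(library)
print(f"pooled lift: {effect:.3f} (SE {se:.3f})")
```

The less precise third estimate receives a quarter of the weight of each of the others, which is why a library of well-powered experiments is worth more than a larger number of small ones.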

For industrial leaders evaluating where to apply controlled experimentation first, the highest-yield candidates are programs with annual spend above five million dollars, multiple deployment variants in active discussion, and a measurement instrument already in place at the outlet, distributor, or account level.

About SIS International

SIS International offers quantitative, qualitative, and strategic research. We provide data, tools, strategies, reports, and insights for decision-making. We also conduct interviews, surveys, focus groups, and other market research methods and approaches. Contact us for your next market research project.